By Ray Burgess

For large-scale solar arrays, monitoring has been restricted to the inverter-level, sometimes sub-array and, more recently though still rarely, string-level. These solutions tend to suffer from a lack of data accuracy. Because they only aggregate sections of the array, they can only point to sections where performance may be impaired. In all but catastrophic failures, there is not enough specificity to enable Operations & Maintenance (O&M) action with sufficient confidence of improved return. Options are now available to take monitoring down to the panel-level, giving a level of insight and accuracy never before available to large-site operators. With this new technology, specific issues can be isolated to the individual panel, identifying wiring faults, panel manufacturing defects, degradation beyond warranty levels, zonal soiling issues and even encroaching shade.

With current systems, whether monitoring occurs at the inverter, string, or panel-level, site managers are presented with data. This can be the voltage, current and power at each monitored node, plus data from environmental sensors tracking irradiance, temperature, wind speed and direction. With the exception of basic threshold alarms, such as ‘inverter offline’ or ‘combiner box open circuit’, it takes time and experienced staff to work out what the data says about the health of the solar array and to identify opportunities to improve output. The dilemma is that increasing data granularity down to the panel-level brings the opportunity for maximum insight and performance management, yet the many-fold increase in the amount of data creates a fear of data overload.

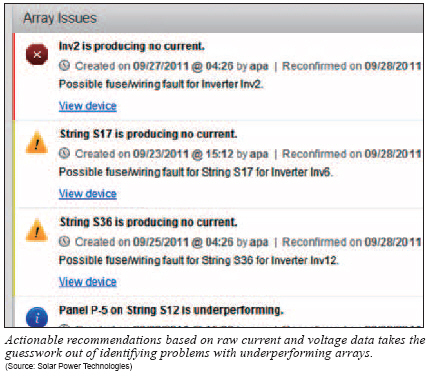

The challenge is to turn data into actionable information: to take data of varying specificity, accuracy and granularity, depending on the monitoring system, and convert it into a very specific maintenance action plan. Data should be tested against defined business rules to ensure that any O&M intervention is justified by its return and is consistent with the contractual commitments for the site.

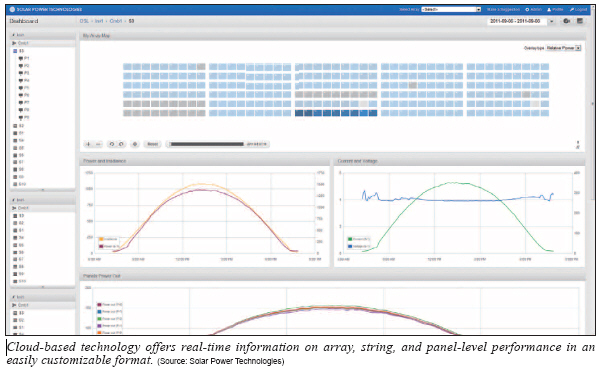

The solution is to use the power of cloud-based computing to analyze the raw data, perform array diagnostics and determine O&M action based on user-defined business rules. In effect, large-scale arrays become ‘intelligent’: they inform the O&M team how, where and when to intervene to keep the array at optimal performance.

Shortcomings with Current Monitoring Systems

In large-scale systems, monitoring started at the utility meter and the inverter. The utility meter was the arbiter of what really got produced, who got paid and how much, so monitoring at that point is essential. The inverter is the point of central control and has traditionally been viewed as the weak link in reliability, so inverter manufacturers have built in increasingly sophisticated monitoring to enable preventive maintenance and rapid fault diagnosis, minimizing downtime. However, in a new system, up to 20% of the energy captured by the PV panels is lost before it reaches the inverter, and this ‘loss’ widens over time due to degradation, soiling and miscellaneous faults.

Monitoring systems have improved, but still fall short of providing the insight necessary for effective performance management, especially in large commercial and utility-scale arrays.

Granularity and Accuracy

Most of the energy loss in solar arrays is due to faults and impairments within panels and the interaction of those impairments within and between strings. Depending on the type of fault, a single faulty panel can cost the array 2 to 10 times the energy lost by the panel itself. Inverter, sub-array and even string monitoring systems do not see most of these losses. They aggregate the array into large sections of panels, and since most panel faults are spread fairly evenly across an array, every section shows a similar small shortfall; these systems will therefore rarely identify faults and almost never pinpoint them precisely enough for action to be taken. Panel-level monitoring solves this granularity problem, and studies have shown that the savings from identifying defective panels alone fully pay for these systems. However, panel-level data raises fears of data overload and of being unable to act on so much detail.
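To make the dilution effect concrete, the sketch below expresses one panel's loss as a percentage of the power seen by a monitoring node that aggregates many panels. The 250 W panel rating follows from the 10 MW / 40,000-panel figures used in this article; the 20-panel string and 200-panel sub-array sizes are hypothetical, and the accuracy figures are the 0.5% and 5% values quoted in the text.

```python
# Hypothetical layout: 250 W panels (10 MW / 40,000 panels), 20 panels per
# string and 200 panels per sub-array. Accuracies are the figures in the text.
PANEL_W = 250.0


def visible_loss_pct(panel_loss_w: float, panels_in_view: int,
                     multiplier: float = 1.0) -> float:
    """Loss caused by one faulty panel, as a percentage of the power measured
    by a node that aggregates `panels_in_view` panels. `multiplier` models the
    2x to 10x knock-on effect of a panel fault on the rest of its string."""
    return 100.0 * panel_loss_w * multiplier / (panels_in_view * PANEL_W)


# A panel losing 25 W (10% of its output), seen at three monitoring levels.
for level, n, accuracy_pct in [("panel", 1, 0.5), ("string", 20, 5.0),
                               ("sub-array", 200, 5.0)]:
    pct = visible_loss_pct(25.0, n)
    print(f"{level:>9}: {pct:.2f}% of measured power, "
          f"hidden by +/-{accuracy_pct}% sensor error: {pct < accuracy_pct}")
```

Even at the top of the 2x to 10x range (`multiplier=10`), the string-level node sees only a 5% shortfall, which is no larger than its own measurement error.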

Most inverter, sub-array and string-level monitoring systems use sensing technology that limits their measurement accuracy to plus or minus 5%. This may be adequate for detecting large catastrophic faults such as open circuits, blown fuses and dead strings, but it is insufficient for identifying array impairments, most of which occur at the panel-level, especially when combined with the lack of granularity. For instance, how do you hold panel Original Equipment Manufacturers (OEMs) to their 0.7% per annum degradation warranty when the monitoring tool has plus or minus 5% measurement accuracy and looks at panels in aggregates of tens or hundreds? New panel-level monitoring technology resolves this issue with 0.5% measurement accuracy and panel-level granularity. However, with a 10 MW site containing 40,000 panels monitored in real time, an O&M team cannot possibly handle such vast amounts of data manually and convert it into a maintenance plan.
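A rough way to see the warranty problem is to ask how many years of degradation at the warranted rate it takes before the cumulative loss even exceeds the sensor's measurement uncertainty. The sketch below uses that simple ratio as an assumed heuristic; it assumes linear degradation and ignores the further dilution from aggregating many panels, so real detection is harder still.

```python
def years_to_detect(accuracy_pct: float, degradation_pct_per_year: float) -> float:
    """Years of linear degradation before the cumulative loss exceeds the
    sensor's measurement uncertainty (a simplifying heuristic, not a standard)."""
    return accuracy_pct / degradation_pct_per_year


WARRANTY_RATE = 0.7  # % per annum, the warranty figure quoted in the text

for label, accuracy in [("string-level sensing", 5.0), ("panel-level sensing", 0.5)]:
    years = years_to_detect(accuracy, WARRANTY_RATE)
    print(f"{label}: +/-{accuracy}% accuracy -> about {years:.1f} years")
```

Under these assumptions, plus or minus 5% sensing needs roughly seven years of warranted degradation before it registers at all, while 0.5% sensing resolves it within the first year.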

Data Relevance

Monitoring systems have been designed to provide measured data. There are multiple systems on the market, but on the whole they present raw data organized in a visual format that corresponds to the asset being monitored: for instance, the bus voltage at the inverter and the string current passing through each combiner box, or at each string. The more nodes being monitored, the more numbers are presented on screen, or on multiple screens. Thus, the more insight required, the more complex the presentation, quickly leading to a system that is unusable, even by experienced O&M teams.

Some systems color-code the data if it falls outside a predefined range: for example, yellow if a string is more than 10% below the average, or red if it is 20% or more below. This points to a section of the array that has a problem, but gives little or no insight into the nature of the problem. Locating the source of the problem and diagnosing potential remedies still requires a site visit and diagnostics with multimeters.
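The threshold scheme described above can be sketched in a few lines. The string names and current readings below are hypothetical; the 10% and 20% thresholds are the ones from the example in the text. Note that the output says only which string is low, not why.

```python
from statistics import mean


def classify_strings(currents: dict[str, float],
                     warn_pct: float = 10.0,
                     alarm_pct: float = 20.0) -> dict[str, str]:
    """Color-code each string by how far it falls below the array average:
    red at alarm_pct or more below, yellow if more than warn_pct below."""
    avg = mean(currents.values())
    status = {}
    for name, value in currents.items():
        shortfall_pct = (avg - value) / avg * 100.0
        if shortfall_pct >= alarm_pct:
            status[name] = "red"
        elif shortfall_pct > warn_pct:
            status[name] = "yellow"
        else:
            status[name] = "green"
    return status


# Hypothetical string currents in amps.
readings = {"S1": 8.0, "S2": 8.0, "S3": 8.0, "S4": 8.0, "S5": 6.5, "S6": 5.5}
print(classify_strings(readings))
```

Because the average includes the impaired strings themselves, a large fault drags the reference down; this is one more reason such alarms give only a coarse pointer.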

Scalability

As mentioned above, monitoring at the panel-level in a 10 MW array would require upwards of 40,000 monitoring points. Until now, collecting data from this many nodes in real time was impractical. Innovative wireless mesh networks are resolving this, and data collection should no longer be a barrier to scaling high-granularity monitoring systems.

The biggest scalability challenge, mentioned several times already, is data overload: the cost of using experienced, expensive labor to sift through masses of data and draw out actionable information. A major Engineering, Procurement and Construction company (EPC) that has installed micro-inverter systems of up to 25 kW reports that it needs an experienced technician reviewing charts for four hours per week to understand how such a system is performing. Scale this to panel-level monitoring of a 10 MW site and it quickly becomes impractical: the cost of the additional experienced personnel soon outweighs the potential benefits of improved energy performance.
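The scaling argument can be made explicit with simple arithmetic. The sketch assumes review effort grows linearly with installed capacity and that a full-time technician works 40 hours per week; both are assumptions, not figures from the EPC. Because 25 kW is the upper end of the systems quoted, using it gives the most optimistic (lowest) estimate.

```python
def technicians_needed(site_kw: float,
                       reference_kw: float = 25.0,
                       hours_per_week_per_reference: float = 4.0,
                       fte_hours_per_week: float = 40.0) -> float:
    """Full-time technicians needed for manual chart review, assuming effort
    scales linearly from the quoted 4 hours/week per 25 kW system."""
    review_hours = site_kw / reference_kw * hours_per_week_per_reference
    return review_hours / fte_hours_per_week


print(technicians_needed(10_000))  # 40.0
```

A 10 MW site is equivalent to 400 such systems, or 1,600 review hours per week: about 40 full-time technicians doing nothing but reading charts.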

Benefits of Using Cloud-Based Intelligence

At the beginning of the article, we asked the question ‘is monitoring really being used to operate and maintain the asset at peak financial performance over its active life?’ In reviewing the shortcomings of current monitoring solutions, it would be hard to argue that there is currently an effective tool to accomplish this task. This is perhaps part of the reason why a 10 MW array is built out to 12 MW and then managed by an O&M partner to produce 9 MW.

With the emergence of panel-level monitoring, there is a new solution with the granularity and accuracy to provide sufficient insight to identify and diagnose all array impairments. This provides the raw data on which to build the array performance management system of the future. The challenge, as stated above, is to convert this wealth of data into valuable information that can improve ROI.

This is where cloud-based computing comes in, with the ability to build a customizable, intelligent analysis and diagnostics system that eliminates the fear of data overload and empowers O&M teams. In short, it makes large-scale arrays intelligent.

Insight

The data from a very large-scale array, being monitored every second at the panel-level, poses no challenge to today’s networking and computer technology. The richer the data set, the more valuable the information that can be derived from it.
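For a sense of scale, the sketch below estimates the raw data volume of a 40,000-panel site sampled once per second. The 16-byte record layout (timestamp, panel ID, voltage, current) is a hypothetical encoding, and sampling around the clock overstates the volume, since panels produce nothing at night.

```python
import struct

PANELS = 40_000
SAMPLES_PER_SECOND = 1
SECONDS_PER_DAY = 86_400

# Hypothetical record: uint32 timestamp, uint32 panel id, float32 voltage,
# float32 current. "<" means little-endian with no padding.
RECORD_FORMAT = "<IIff"
BYTES_PER_RECORD = struct.calcsize(RECORD_FORMAT)  # 16

readings_per_day = PANELS * SAMPLES_PER_SECOND * SECONDS_PER_DAY
gigabytes_per_day = readings_per_day * BYTES_PER_RECORD / 1e9

print(f"{readings_per_day:,} readings/day, about {gigabytes_per_day:.1f} GB/day")
```

Roughly 3.5 billion readings and some 55 GB per day under these assumptions: far too much for people to read, but an ordinary workload for cloud storage and analysis.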

A suite of analysis tools runs continuously on the data, comparing panels, strings and sub-array zones to each other, to reference levels and historical levels. They look at absolute power levels, normalized levels based on environmental data, and analyze the voltage and current levels that contribute to the power being generated. The tools are designed to recognize impairments using pre-defined models. They can differentiate between damaged panels, degraded panels, shaded panels or simple soiling. They can pinpoint wiring faults, dangerous intermittent faults, or simply blown string fuses.
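The article does not describe the impairment models themselves, so the sketch below shows only the first step such tools might take. It normalizes each panel's power to the irradiance and flags panels that fall well below the median of their own string. The linear irradiance model (temperature ignored), the 5% tolerance and the sample readings are all assumptions. Within one string the normalization cancels out; its value is in comparing across strings and against reference levels, which this sketch leaves out.

```python
from dataclasses import dataclass
from statistics import median

STC_IRRADIANCE_W_M2 = 1000.0  # irradiance at standard test conditions


@dataclass
class PanelReading:
    panel_id: str
    string_id: str
    voltage_v: float
    current_a: float


def normalized_power(r: PanelReading, irradiance_w_m2: float,
                     rated_power_w: float) -> float:
    """Measured power as a fraction of the power expected at this irradiance
    (simple linear model; temperature and other losses are ignored)."""
    expected_w = rated_power_w * irradiance_w_m2 / STC_IRRADIANCE_W_M2
    return r.voltage_v * r.current_a / expected_w


def flag_underperformers(readings: list[PanelReading], irradiance_w_m2: float,
                         rated_power_w: float,
                         tolerance: float = 0.05) -> list[tuple[str, float]]:
    """Return (panel_id, shortfall %) for panels more than `tolerance` below
    the median normalized power of their own string."""
    by_string: dict[str, list[PanelReading]] = {}
    for r in readings:
        by_string.setdefault(r.string_id, []).append(r)

    flagged = []
    for panels in by_string.values():
        scores = {p.panel_id: normalized_power(p, irradiance_w_m2, rated_power_w)
                  for p in panels}
        peer_median = median(scores.values())
        for panel_id, score in scores.items():
            shortfall = 1.0 - score / peer_median
            if shortfall > tolerance:
                flagged.append((panel_id, round(100.0 * shortfall, 1)))
    return flagged


# Hypothetical series string: same current, one panel with depressed voltage.
readings = [
    PanelReading("P1", "A", 30.0, 6.5),
    PanelReading("P2", "A", 30.2, 6.5),
    PanelReading("P3", "A", 23.0, 6.5),
    PanelReading("P4", "A", 29.8, 6.5),
]
print(flag_underperformers(readings, irradiance_w_m2=800.0, rated_power_w=250.0))
# [('P3', 23.1)]
```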

Rules-Based Decision-Making

An ‘Intelligent Array’ tells array owners what needs to be done to improve the array output. The intelligence comes not just from the diagnostics capability, but also from informed decisions that the system makes about what needs to be done to improve ROI and/or to stay in compliance with contractual commitments on energy harvest. To enable this, the site operator and O&M organization input financial criteria and set thresholds and rules for alerts and recommendations. These include the cost of materials, such as panels, the cost of labor for each common repair, the warranty conditions for all major assets in the array, and contractual commitments, such as minimum power ratio or normalized energy output levels. The power loss for each impairment is then calculated automatically, and the system recommends specific, targeted maintenance actions that are consistent with the defined financial rules. Combined with impairment diagnosis, this ROI-based decision-making dramatically reduces the resources needed to manage a large-scale solar asset aggressively. For those inclined to second-guess the system, the cloud-based ‘Intelligent Array’ keeps a full analysis trail for every recommendation, including detailed engineering charts that support the initial diagnosis, as well as the financial return calculations that justified the action.
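A minimal sketch of one such business rule follows, assuming a simple payback test. The repair is recommended if its cost, net of warranty coverage, pays back within a maximum horizon, or if it is needed to meet a contractual commitment. The class and field names, the specific-yield approach to estimating lost energy, and all prices are hypothetical. The 0.5 kW loss for one 250 W panel reflects the 2x knock-on effect mentioned earlier.

```python
from dataclasses import dataclass


@dataclass
class Impairment:
    panel_id: str
    lost_capacity_kw: float              # array-level loss, incl. string effects
    material_cost: float                 # e.g. a replacement panel
    labor_cost: float                    # crew cost for this repair type
    under_warranty: bool = False         # materials covered by the OEM
    needed_for_compliance: bool = False  # repair required to meet a contract


@dataclass
class FinancialRules:
    energy_price_per_kwh: float
    annual_yield_kwh_per_kw: float  # site's specific yield
    max_payback_years: float


def evaluate(imp: Impairment, rules: FinancialRules) -> tuple[bool, float]:
    """Return (recommend_repair, payback_years) for one diagnosed impairment."""
    annual_loss = (imp.lost_capacity_kw * rules.annual_yield_kwh_per_kw
                   * rules.energy_price_per_kwh)
    repair_cost = imp.labor_cost + (0.0 if imp.under_warranty else imp.material_cost)
    payback = repair_cost / annual_loss if annual_loss > 0 else float("inf")
    recommend = imp.needed_for_compliance or payback <= rules.max_payback_years
    return recommend, payback


rules = FinancialRules(energy_price_per_kwh=0.15, annual_yield_kwh_per_kw=1500,
                       max_payback_years=2.0)
dead_panel = Impairment("P3", lost_capacity_kw=0.5, material_cost=200, labor_cost=150)
covered = Impairment("P7", lost_capacity_kw=0.5, material_cost=200, labor_cost=150,
                     under_warranty=True)

for imp in (dead_panel, covered):
    ok, years = evaluate(imp, rules)
    print(f"{imp.panel_id}: repair={ok}, payback={years:.1f} years")
```

Under these assumed rules, the same fault is deferred when the owner pays for the panel but scheduled when the OEM warranty covers it. This illustrates why warranty conditions belong in the rule set.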

Access and Ease of Use

The Cloud is available everywhere at any time, and multiple users can interact with the data for any one site simultaneously. Site management can be analyzing month-to-date performance or reviewing the supporting data for a warranty claim with a panel manufacturer. Meanwhile, O&M crews can be working through the day’s maintenance schedule, with mobile devices guiding them to the precise locations of diagnosed impairments, already equipped with the materials needed to resolve each issue.

Information can be tailored to specific users, with access control lists defining the privileges of each user. For instance, strict control can be kept over access to the financial rules database. The site owner and the O&M provider can each manage their own rule sets, so that each can manage to its specific commitments. The O&M provider could set rules that enable it to comply with its 95% performance ratio commitment, whereas the owner can review compliance with power purchase agreement (PPA) commitments, or analyze the response times of the O&M crew.
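The sketch below shows one way to express such access control lists. Each resource, such as a rule set, carries a list of which users may perform which actions. The resource names, user names and read/write actions are hypothetical and do not describe any particular product.

```python
# Hypothetical ACLs: resource -> {user -> set of permitted actions}.
ACLS: dict[str, dict[str, set[str]]] = {
    "rules/owner": {"owner_admin": {"read", "write"}, "om_lead": {"read"}},
    "rules/om": {"om_lead": {"read", "write"}, "owner_admin": {"read"}},
    "performance": {"owner_admin": {"read"}, "om_lead": {"read"},
                    "field_tech": {"read"}},
}


def is_allowed(user: str, resource: str, action: str) -> bool:
    """True only if the resource's ACL explicitly grants `action` to `user`;
    unknown users or resources are denied by default."""
    return action in ACLS.get(resource, {}).get(user, set())


print(is_allowed("om_lead", "rules/om", "write"))     # True
print(is_allowed("om_lead", "rules/owner", "write"))  # False
```

Denying by default means a new user sees nothing until granted access, which suits the strict control over financial rules described above.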

Finally, the beauty of Cloud-based computing is that there are no requirements for complex on-site hardware and no worries about maintaining and upgrading software.

Large-scale solar arrays are complex assets that need to be aggressively managed if the objective is to maximize ROI. Advanced panel-level monitoring systems are a powerful new tool in array performance management. However, the amount of data that will come from such systems outstrips current monitoring visualization tools and, with them, the ability of O&M personnel to analyze the output and define actions. The solution is to use a suite of cloud-based intelligent analysis and diagnostics tools that can feed on the data, converting it into precise and actionable information for site management and the O&M team, based on defined business rules. The result is that arrays become ‘intelligent’. They optimize themselves where possible and then inform the operators exactly how, where and when intervention is required to maintain the array at the desired performance for maximum ROI.

Ray Burgess joined the Solar Power Technologies team as President and CEO in July 2009. He has over 30 years of leadership experience in the technology industry, spanning semiconductors, software and micro-mechanical systems. (www.spowertech.com)

For more information, please send your e-mails to pved@infothe.com.

ⓒ2011 www.interpv.net All rights reserved.